LLM Provider Setup

Configure your AI language model provider using either the web interface or the command-line interface (CLI). All providers follow the same setup process.

⏱️ 2-5 minutes

📚 Beginner

🔧 Configuration

⚡ Two Ways to Set Up

Choose your preferred method to configure LLM providers:

Web Interface (Recommended)

Visual interface with forms and validation

Steps:

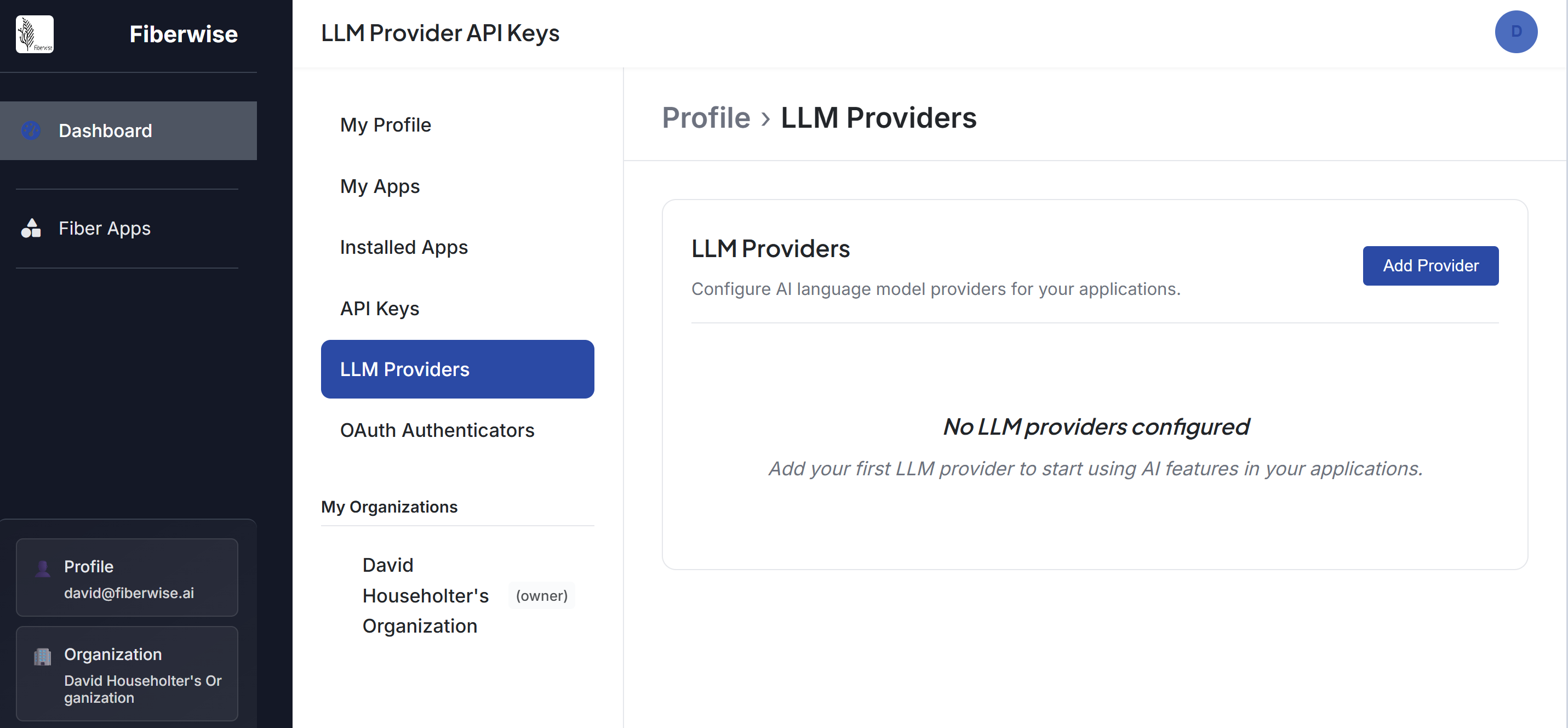

- Navigate to your Fiberwise instance

- Go to Settings → LLM Providers

- Click "Add Provider"

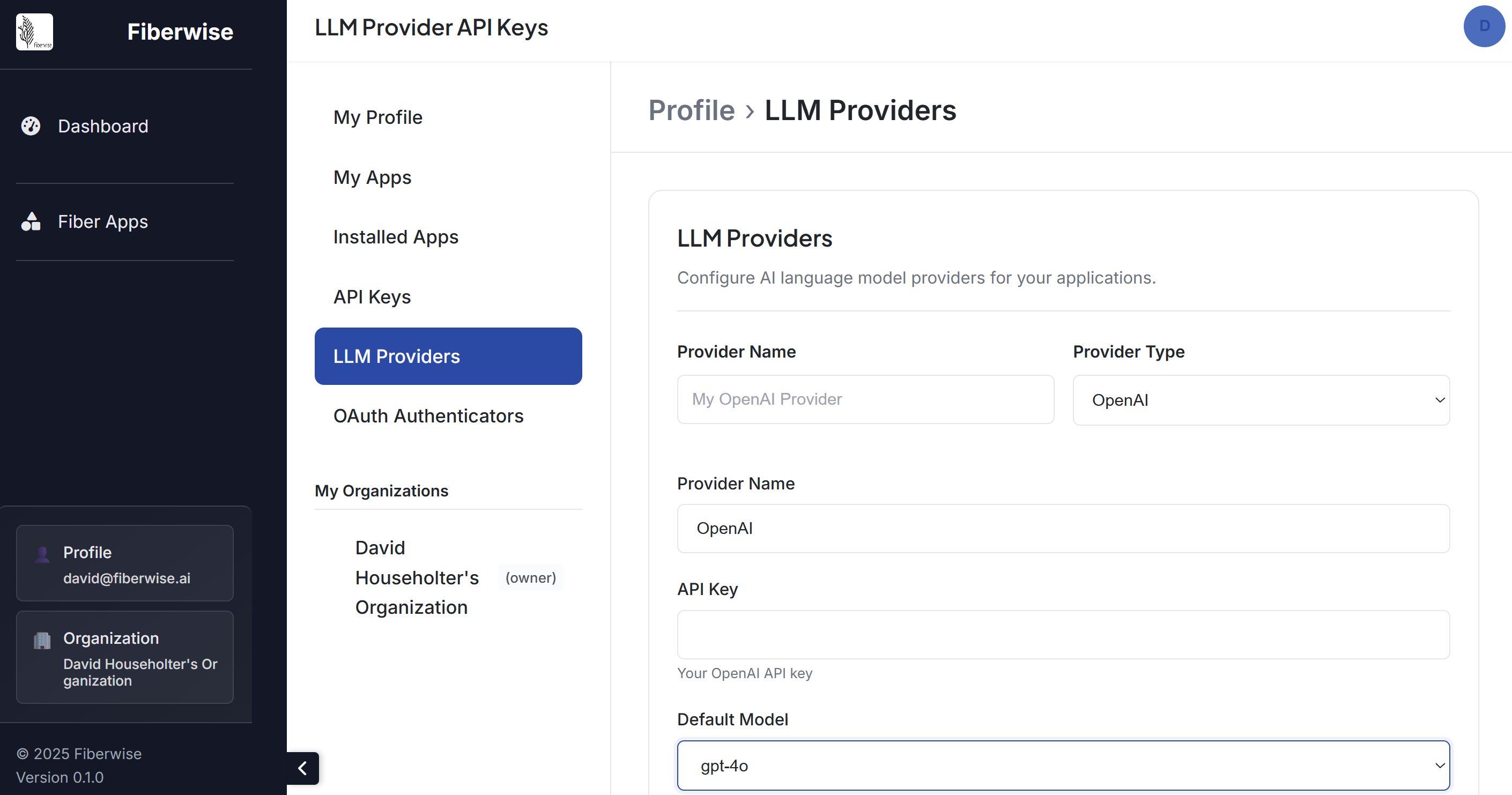

- Select your provider type and enter your API key

- Test the connection and save

Add your provider details:

Command Line Interface

Quick setup via terminal commands

Universal Command for All Providers:

```shell
# Add any LLM provider with a single command
fiber llm add-provider --provider [PROVIDER_NAME] --api-key [YOUR_API_KEY]

# Examples for different providers:
fiber llm add-provider --provider openai --api-key sk-...
fiber llm add-provider --provider anthropic --api-key sk-ant-...
fiber llm add-provider --provider google --api-key AI...
fiber llm add-provider --provider cohere --api-key co-...
```
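Typing the key directly into these commands can leave it in your shell history. A minimal sketch, assuming your keys are already stored in environment variables: the hypothetical function `add_providers_from_env` below calls only the documented `fiber llm add-provider` command, once for each provider whose variable is set. The variable names (`OPENAI_API_KEY`, `ANTHROPIC_API_KEY`, `GOOGLE_API_KEY`, `COHERE_API_KEY`) are assumptions for illustration, not settings defined by Fiberwise.

```shell
# Hypothetical helper: register every provider whose API key is present in
# the environment, using only the documented `fiber llm add-provider` flags.
# The environment variable names are assumptions, not Fiberwise settings.
add_providers_from_env() {
  for provider in openai anthropic google cohere; do
    # Map each provider name to the (assumed) variable holding its key.
    case "$provider" in
      openai)    key="${OPENAI_API_KEY:-}" ;;
      anthropic) key="${ANTHROPIC_API_KEY:-}" ;;
      google)    key="${GOOGLE_API_KEY:-}" ;;
      cohere)    key="${COHERE_API_KEY:-}" ;;
    esac

    # Skip providers with no key set instead of failing.
    if [ -z "$key" ]; then
      echo "skipping $provider (no key in environment)" >&2
      continue
    fi

    fiber llm add-provider --provider "$provider" --api-key "$key"
  done
}
```

To use it, source the file in your shell, run `add_providers_from_env`, and then pick a default with `fiber llm set-default`. Reading the key from a variable keeps the literal value out of your shell history, but it is still passed as a command-line argument, so it may be visible in process listings while the command runs.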

```shell
# Set as default provider
fiber llm set-default --provider openai
```

🚀 Next Steps

Now that your LLM provider is configured, you're ready to build AI applications!